Containerized DSS engine¶

While visual recipes are best run on Spark or in SQL databases, some setups don’t have access to compute resources outside the DSS node, some recipe instances have inputs or outputs that don’t let the recipe offload the compute externally, and some recipes just don’t manipulate enough data to warrant the use of Spark. In those cases the recipes will run with the engine named “DSS”, implying that the DSS node will be the one providing the compute resources. This can lead to over-consumption of CPU and memory on the DSS node.

If the DSS node can leverage a Kubernetes cluster, then most of these recipes can be pushed to run inside the Kubernetes cluster instead of on the DSS node, thereby freeing CPU and memory to keep the DSS UI responsive. The feature is activated in Administration > Settings > Containerized Execution, in the Containerized visual recipes section.

Setup¶

The prerequisites are the same as for containerization of code recipes.

Build the base image¶

Before you can deploy to Kubernetes, at least one “base image” must be constructed.

Warning

After each upgrade of DSS, you must rebuild all base images.

To build the base image, run the following command from the DSS data directory:

./bin/dssadmin build-base-image --type cde

On instances provisioned by Cloud Stacks, this is handled by the setup action Setup Kubernetes and Spark-on-Kubernetes.

Instance-specific image¶

For containerized visual recipes to be able to leverage custom Java libraries, custom JDBC drivers or plugin components, such as custom datasets or custom processors, a specific image must be built on top of the base image.

For instances provisioned by Cloud Stacks, we recommend using the following Setup Action:

Type: Run ansible Task

Stage: After DSS is started

Ansible Task:

---
- name: Build image
  become: true
  become_user: "dataiku"
  command: "{{dataiku.dss.datadir}}/bin/dssadmin build-cde-plugins-image"
- name: Push image
  become: true
  become_user: "dataiku"
  command: "{{dataiku.dss.datadir}}/bin/dsscli container-exec-base-images-push"

For on-premises installations, you can perform the same task using the button Build image for Containerized Visual Recipes in Administration > Settings > Containerized Execution.

Plugins¶

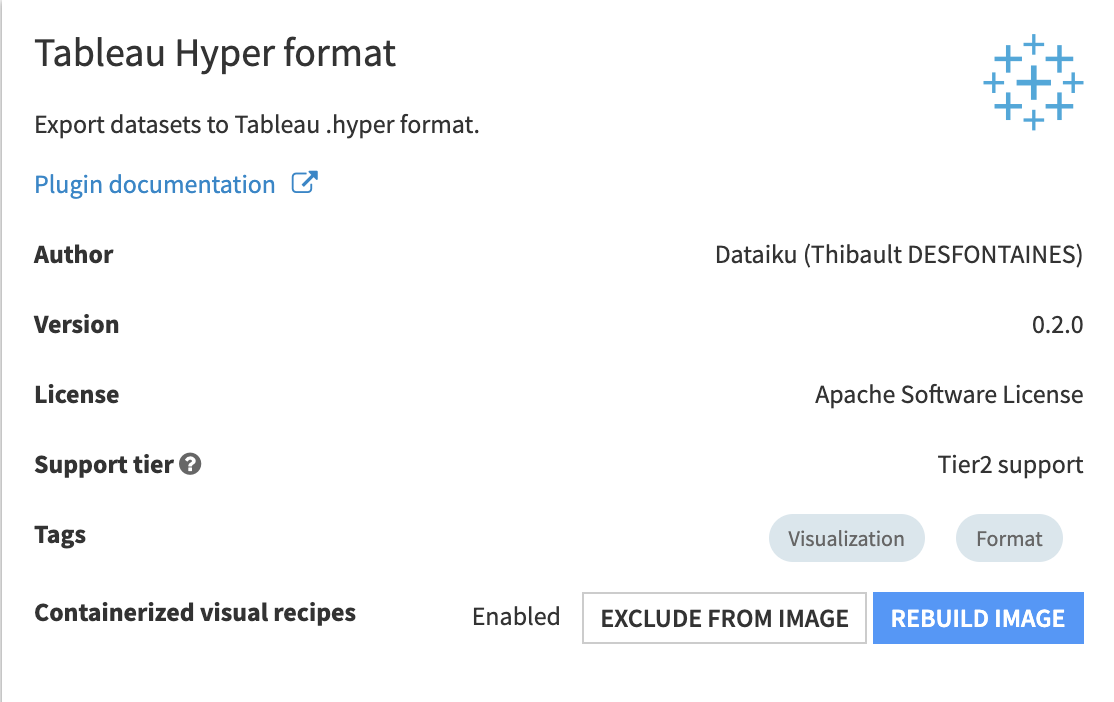

By default, plugins that DSS deems of interest for visual recipes are added to the image automatically when plugins are installed or updated. This auto-rebuilding behavior can be turned off in Administration > Settings > Containerized Execution, in the Containerized visual recipes section. You can also choose on a per-plugin basis whether the plugin is included in the base image by going to the plugin’s summary page and using the “Exclude from image” / “Include in image” button.

Note

By default, development plugins are not auto-rebuilt.

Containerized execution configs¶

Container configs hold the settings controlling pods’ sizes and namespaces, and what kind of workload they accept in DSS. To use containerized visual recipes, some of them need to have their Workload type set to “Any workload type” (the default) or “Visual recipes”. Container configs with a “User code (Python, R, …)” workload type are not accepted by visual recipes.

You can set a container config to use by default for all visual recipes on the instance in Administration > Settings > Containerized Execution in the Default container execution configuration section. This setting can be overridden in each project and each individual recipe.

Running a visual recipe in a container¶

For a given visual recipe, if the chosen engine is “DSS”, you can control whether it runs in a container from its Advanced tab, by picking a Container configuration (or leaving it at the instance- or project-level default setting). When a container config is set, the engine label should change to “DSS (containerized)” to indicate that the recipe is expected to run in a container. If it changes to “DSS (local)” instead, the characteristics of the recipe, or of its inputs or outputs, make it unsuitable for containerization. Notable cases where this happens are:

a Prepare recipe uses a “Python Function” processor

a recipe with input or output on some dataset types: internal datasets (metrics, stats), Hive, SCP, SFTP or Cassandra datasets

a recipe with input or output datasets on connections with authentication modes relying on the DSS VM, such as those using Kerberos or instance profiles on AWS

a recipe with input or output datasets on connections only accessible locally, like SQL connections to a database on localhost

Note

To flag a connection as not to be used in containerized recipes, add a property in its Advanced connection properties, with name “cde.compatible” and value “false”.

Note

For connections targeting a service running on the same VM as DSS, it is often possible to use the internal IP address instead of “localhost”.
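The cases listed above can be read as a checklist. The following is a minimal sketch of that checklist, not the logic DSS actually applies: the `Connection` and `Dataset` classes, their field names, and the dataset type strings are hypothetical, and the list of cases in the text is described as "notable", not exhaustive. Only the “cde.compatible” property name and the two engine labels come from the text.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Dataset types the text lists as unsuitable (type strings are illustrative).
UNSUPPORTED_DATASET_TYPES = {"internal", "hive", "scp", "sftp", "cassandra"}


@dataclass
class Connection:  # hypothetical model, not a DSS class
    name: str
    relies_on_dss_vm_auth: bool = False  # e.g. Kerberos, AWS instance profiles
    local_only: bool = False             # e.g. a database reachable only on localhost
    properties: Dict[str, str] = field(default_factory=dict)


@dataclass
class Dataset:  # hypothetical model, not a DSS class
    name: str
    dataset_type: str
    connection: Optional[Connection] = None


def expected_engine_label(datasets: List[Dataset],
                          uses_python_function_processor: bool = False) -> str:
    """Return the engine label one would expect from the cases in the text."""
    local, containerized = "DSS (local)", "DSS (containerized)"
    if uses_python_function_processor:
        return local
    for ds in datasets:
        if ds.dataset_type.lower() in UNSUPPORTED_DATASET_TYPES:
            return local
        conn = ds.connection
        if conn is None:
            continue
        if conn.relies_on_dss_vm_auth or conn.local_only:
            return local
        # Explicit opt-out through the advanced connection property.
        if conn.properties.get("cde.compatible", "true").lower() == "false":
            return local
    return containerized


s3 = Connection("s3_bucket")
legacy = Connection("legacy_db", properties={"cde.compatible": "false"})
print(expected_engine_label([Dataset("orders", "s3", s3)]))
print(expected_engine_label([Dataset("orders", "sql", legacy)]))
```

The first call involves no listed exclusion, so the sketch reports the containerized label; the second is excluded by the “cde.compatible” property.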

Tuning¶

Containerized visual recipes are subject to the limits enforced on the container config they run with. While containerization allows pulling compute resources from nodes other than the DSS node, these resources are usually not infinite. Memory usage, in particular, can exceed the pod’s limits or the capacity of the node running the pod. Additionally, because a containerized visual recipe runs as a Java process, it is subject to the “Xmx” command line flag, which controls the maximum heap memory it is allowed to grab from the container. To control memory usage inside the container, there are two options:

add a “dku.cde.xmx” property in the Custom properties of the container config. The value is a standard “Xmx” value, like “4g” (for 4GB)

set a “Memory limit” on the container config and add a “dku.cde.memoryOverheadFactor” property in the Custom properties of the container config. The correct value of “Xmx” will then be deduced so that “Xmx + overheadFactor * Xmx” fits within the memory limit of the container config
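To make the second option concrete, the constraint “Xmx + overheadFactor * Xmx” ≤ memory limit rearranges to Xmx ≤ limit / (1 + overheadFactor). The sketch below works through that arithmetic. It is an illustration only: the function name is hypothetical, the limit is expressed in megabytes for simplicity, and rounding down to a whole megabyte is an assumption, since the text does not say how DSS rounds the deduced value.

```python
import math


def xmx_megabytes(memory_limit_mb: int, overhead_factor: float) -> int:
    """Largest whole-megabyte Xmx with Xmx * (1 + overhead_factor) <= limit.

    Rounding down is an assumption made for this illustration.
    """
    if memory_limit_mb <= 0:
        raise ValueError("memory limit must be positive")
    if overhead_factor < 0:
        raise ValueError("overhead factor must not be negative")
    return math.floor(memory_limit_mb / (1.0 + overhead_factor))


# A 4 GB limit (4096 MB) with an overhead factor of 0.25:
print(xmx_megabytes(4096, 0.25))  # -> 3276
```

With these example numbers, about 3.2 GB goes to the Java heap and the remaining 0.8 GB is left as headroom for the rest of the process inside the container.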

Additionally, it is advisable to set a “CPU request” on the container config, for example half a core, so that the pod has CPU resources at its disposal right from the start: Java processes tend to have a CPU usage spike when they start and load all their classes.

Pipelines¶

When using a chain of visual recipes, DSS executes each recipe independently. For example, if you have a grouping recipe followed by a prepare recipe then a join recipe then a grouping recipe, and so forth, each recipe will be executed in sequence, and the DSS engine will read and write the input and output datasets in each recipe.

CDE pipelines are a Dataiku capability that combines several consecutive recipes in a DSS workflow, provided each recipe is using the Containerized DSS engine. These combined recipes then run as a single job activity, and can skip writing intermediate datasets.

Using a CDE pipeline can strongly boost performance by avoiding unnecessary writes and reads of intermediate datasets. CDE pipelines also allow you to optimize the data storage capacity without having to manually re-factor the Dataiku flow (for example, by reducing the number of datasets).
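As a rough illustration of the idea of merging consecutive recipes, the toy sketch below groups a linear chain of recipes into runs that all use the Containerized DSS engine. It is not the Flow dependencies engine: real Flows are graphs, not chains, and DSS also takes dataset and recipe settings into account. The function name and the recipe names are hypothetical.

```python
from typing import List, Tuple


def group_consecutive_cde(chain: List[Tuple[str, bool]]) -> List[List[str]]:
    """Group a linear chain of (recipe_name, uses_cde) into job activities.

    Consecutive recipes using the containerized engine are merged into one
    group; any other recipe stays alone in its own group.
    """
    groups: List[List[str]] = []
    current: List[str] = []
    for name, uses_cde in chain:
        if uses_cde:
            current.append(name)
            continue
        if current:  # close the run of CDE recipes before this recipe
            groups.append(current)
            current = []
        groups.append([name])
    if current:
        groups.append(current)
    return groups


chain = [
    ("group_1", True),
    ("prepare_1", True),
    ("join_1", False),  # e.g. a recipe running on another engine
    ("group_2", True),
]
print(group_consecutive_cde(chain))
# -> [['group_1', 'prepare_1'], ['join_1'], ['group_2']]
```

In this toy example, only the first two recipes would be candidates for a single merged activity; the dataset between them is the one whose write could be skipped.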

Using CDE pipelines¶

CDE pipelines are not enabled by default in DSS but can be enabled on a per-project basis.

Go to the project Settings

Go to Pipelines

Select Enable CDE pipelines

Save settings

When you run a build job, the Dataiku Flow dependencies engine automatically detects if there are CDE pipelines based on the settings of the datasets and recipes. The engine then creates separate job activities for each of them.

The details of the CDE pipelines that have run can be visualized in the job results page. On the left part of the screen, “CDE pipeline (xx activities)” will appear and mention how many recipes or recipe executions were merged together.

Configuring behavior for intermediate datasets¶

A CDE pipeline covers one or more intermediate datasets that are part of the pipeline. For each of these intermediate datasets, you can configure whether it is virtualized or not.

When a dataset is virtualized, Dataiku will not write it while executing the recipe, and instead merely caches it locally in the container.

If the dataset is not useful by itself, but is only required as an intermediate step to feed recipes down the Flow, virtualization improves performance by preventing DSS from writing the data of this intermediate dataset when executing the CDE pipeline.

Some intermediate datasets are however useful by themselves. For example, if the dataset is used by charts, enabling virtualization can prevent DSS from creating required charts, as the data needed to create the charts would not be available.

To enable virtualization for a dataset:

Open the dataset and go to the Settings tab at the top of the page

Go to the Advanced tab

Check “Virtualizable in build”

You can also enable virtualization for one or more datasets at once by performing these steps:

Select one or more datasets in the Flow

Locate the “Other actions” section in the right panel and select Allow build virtualization (for pipelines)

Configuring behavior for recipes¶

A CDE pipeline covers multiple recipes, and you can configure the behavior of the pipeline for each recipe.

Open the recipe and go to the Advanced tab at the top of the page

Check the options for “Pipelining”:

“Can this recipe be merged in an existing recipes pipeline?”

“Can this recipe be the target of a recipes pipeline?”

The first setting determines whether a recipe can be concatenated inside an existing CDE pipeline. The second setting determines whether running the recipe can trigger a new CDE pipeline.

Pipeline execution settings¶

Inside the container, all recipes of the pipeline are executed, and intermediate datasets cached if they are virtualized. This can be quite taxing on the container so there are a few settings to control resource consumption in the container. These settings are specified as Custom properties on the container configuration used for the CDE engine.

cde.pipeline.activityParallelism (number) : controls how many recipes of the pipeline can be running concurrently inside the container, flow topology permitting. Higher values can make the container run many recipes in parallel in the same JVM, possibly causing memory exhaustion.

cde.pipeline.virtualized.compression (empty, or “gz” or “lz4”; default “lz4”) : when intermediate datasets are virtualized, the CDE pipeline will cache them as files inside the container. To limit disk space consumption inside the container, these files are compressed.

cde.pipeline.virtualized.verificationOnWrite (true or false; default true) : when intermediate datasets are virtualized, the CDE pipeline will cache them as files inside the container. These files store data produced by the recipe of which the intermediate dataset is an output. Some recipes pass the data as-is from their input, without making sure it conforms to the data type of the column. This setting controls whether the data is verified before being written out to the cached file. This verification incurs a cost in CPU cycles and can be disabled if you are confident that the data is correct.

cde.pipeline.virtualized.verificationOnRead (true or false; default false) : when intermediate datasets are virtualized, the CDE pipeline will cache them as files inside the container. This setting controls whether the conformity of the data to the schema of the intermediate dataset is verified when reading the cached data. When cde.pipeline.virtualized.verificationOnWrite is true, the data should already be correct and this setting can be left at false.
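The four properties above can be checked before being entered as Custom properties. The helper below is a hypothetical sketch, not part of DSS: the property names and allowed values are taken from the descriptions above, while the function name, the representation of properties as a dictionary of strings, and the requirement that the parallelism be at least 1 are assumptions.

```python
from typing import Dict, List

ALLOWED_COMPRESSION = {"", "gz", "lz4"}
BOOLEAN_KEYS = (
    "cde.pipeline.virtualized.verificationOnWrite",
    "cde.pipeline.virtualized.verificationOnRead",
)


def check_pipeline_properties(props: Dict[str, str]) -> List[str]:
    """Return a list of problems found; an empty list means the values look valid."""
    problems: List[str] = []

    parallelism = props.get("cde.pipeline.activityParallelism")
    if parallelism is not None:
        # Assumption: a positive whole number is expected.
        if not parallelism.isdigit() or int(parallelism) < 1:
            problems.append("activityParallelism must be a positive integer")

    compression = props.get("cde.pipeline.virtualized.compression")
    if compression is not None and compression not in ALLOWED_COMPRESSION:
        problems.append("compression must be empty, 'gz' or 'lz4'")

    for key in BOOLEAN_KEYS:
        value = props.get(key)
        if value is not None and value not in ("true", "false"):
            problems.append(f"{key} must be 'true' or 'false'")

    return problems


example = {
    "cde.pipeline.activityParallelism": "2",
    "cde.pipeline.virtualized.compression": "gz",
    "cde.pipeline.virtualized.verificationOnWrite": "true",
    "cde.pipeline.virtualized.verificationOnRead": "false",
}
print(check_pipeline_properties(example))  # -> []
```

Keeping the parallelism low in such a configuration follows the caution above: every concurrently running recipe shares the same JVM memory inside the container.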