Usage of Spark in DSS

When Spark support is enabled in DSS, a large number of components feature additional options to run jobs on Spark.

SparkSQL recipes

SparkSQL recipes work broadly like SQL recipes, but they are not limited to SQL datasets. DSS fetches the data and passes it on to Spark.

You can set the Spark configuration in the Advanced tab.

See SparkSQL recipes

Visual recipes

You can run the Prepare recipe and other visual recipes on Spark. To do so, select Spark as the execution engine and select the appropriate Spark configuration.

For each visual recipe that supports a Spark engine, you can select the engine under the “Run” button in the recipe’s main tab, and set the Spark configuration in the “Advanced” tab.

All visual data-transformation recipes support running on Spark, including:

Prepare

Sync

Sample / Filter

Group

Distinct

Join

Pivot

Sort

Split

Top N

Window

Stack

Falling back to DSS execution for small datasets

Visual recipes may auto-select Spark when it is enabled. However, Spark is not always the best choice, especially for small, non-partitioned datasets. This default can be overridden.

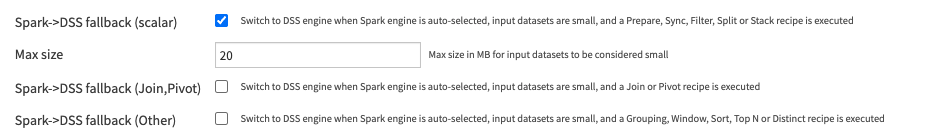

The Recipe Engines section in “Administration > Settings > Engines & Connections”, or at the project level in “Settings > Engines & Connections”, can be used to control which recipes and dataset sizes use DSS instead of Spark as the default engine choice.

Fallback from Spark to DSS for small datasets is disabled for installations predating Dataiku 14.5. It is enabled for later installations, for all visual recipes except Sync, Pivot and Join, with a dataset size threshold of 20 MB.
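The default rule described above can be illustrated with a short Python sketch. This is not DSS code: the function and constant names are hypothetical, and the sketch assumes that Spark would otherwise be auto-selected, that the 20 MB threshold is counted in binary megabytes, and that only datasets strictly below the threshold fall back to DSS. None of these details is specified here.

```python
# Illustrative model of the default fallback rule; all names are hypothetical.
SMALL_DATASET_THRESHOLD_BYTES = 20 * 1024 * 1024  # assumed: 20 MB in binary units
EXCLUDED_RECIPES = {"sync", "pivot", "join"}       # recipes that never fall back


def default_engine(recipe_type, dataset_size_bytes, fallback_enabled=True):
    """Return "DSS" or "SPARK" as the default engine under the assumed rule."""
    if not fallback_enabled:
        # Fallback disabled: Spark remains the default choice.
        return "SPARK"
    if recipe_type.lower() in EXCLUDED_RECIPES:
        # Sync, Pivot and Join keep Spark regardless of dataset size.
        return "SPARK"
    # Assumption: strictly below the threshold counts as "small".
    if dataset_size_bytes < SMALL_DATASET_THRESHOLD_BYTES:
        return "DSS"
    return "SPARK"


print(default_engine("group", 5 * 1024 * 1024))    # small Group input
print(default_engine("join", 5 * 1024 * 1024))     # Join is excluded
print(default_engine("group", 500 * 1024 * 1024))  # large Group input
```

Under these assumptions, a 5 MB input to a Group recipe defaults to DSS, while a Join recipe or a 500 MB input stays on Spark. The actual behavior is governed by the Recipe Engines settings.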

Python code

You can write Spark code using Python:

In a PySpark recipe

In a Python notebook
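As a minimal sketch, a PySpark recipe typically reads a DSS dataset as a Spark DataFrame, transforms it, and writes the result to an output dataset. The example below uses the `dataiku.spark` helper module; the function name `run_recipe`, its parameters, and the `amount` column are hypothetical and chosen for illustration only. The imports sit inside the function because these modules are only available in a DSS Spark environment.

```python
def run_recipe(input_name, output_name, min_amount=0):
    """Read a DSS dataset with Spark, filter it, and write it to another dataset."""
    # Imported lazily: these modules exist only inside a DSS Spark runtime.
    import dataiku
    import dataiku.spark as dkuspark
    from pyspark import SparkContext
    from pyspark.sql import SQLContext

    # Reuse the running Spark context if there is one.
    sc = SparkContext.getOrCreate()
    sql_context = SQLContext(sc)

    # Read the input dataset as a Spark DataFrame.
    input_ds = dataiku.Dataset(input_name)
    df = dkuspark.get_dataframe(sql_context, input_ds)

    # Hypothetical transformation: "amount" is an assumed column name.
    filtered = df.filter(df["amount"] >= min_amount)

    # Write the DataFrame and its schema to the output dataset.
    output_ds = dataiku.Dataset(output_name)
    dkuspark.write_with_schema(output_ds, filtered)
```

In a recipe, you would call `run_recipe` at the end of the script with the names of the recipe's input and output datasets. In a notebook, the same calls can be run cell by cell.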

Note about Spark code in Python notebooks

All Python notebooks use the same named Spark configuration. See Spark configurations for more information about named Spark configurations.

When you change the named Spark configuration used by notebooks, you need to restart DSS afterwards.

R code

Warning

Tier 2 support: SparkR and sparklyr are covered by Tier 2 support.

You can write Spark code using R:

In a Spark R recipe

In an R notebook

Both the recipe and the notebook support two different APIs for accessing Spark:

The “SparkR” API, i.e. the native API bundled with Spark

The “sparklyr” API

Note about Spark code in R notebooks

All R notebooks use the same named Spark configuration. See Spark configurations for more information about named Spark configurations.

When you change the named Spark configuration used by notebooks, you need to restart DSS afterwards.

Scala code

You can use Scala, Spark’s native language, to implement your custom logic. The Spark configuration is set in the recipe’s Advanced tab.

Interaction with DSS datasets is provided through a dedicated DSS Spark API, which makes it easy to read datasets into SparkSQL dataframes and write dataframes back to datasets.

Warning

The Spark-Scala notebook is deprecated and will soon be removed.

Machine Learning with MLLib - Deprecated

See the dedicated MLLib page.